Limit the total number of pages to crawl: with this option, we will limit the number of pages crawled by the “ spider.”.If we want our spider to ignore that file and inspect all areas of the website, we need to check this option.

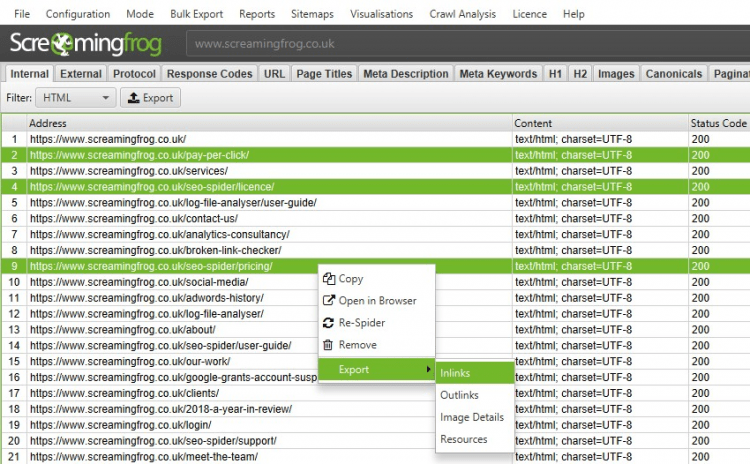

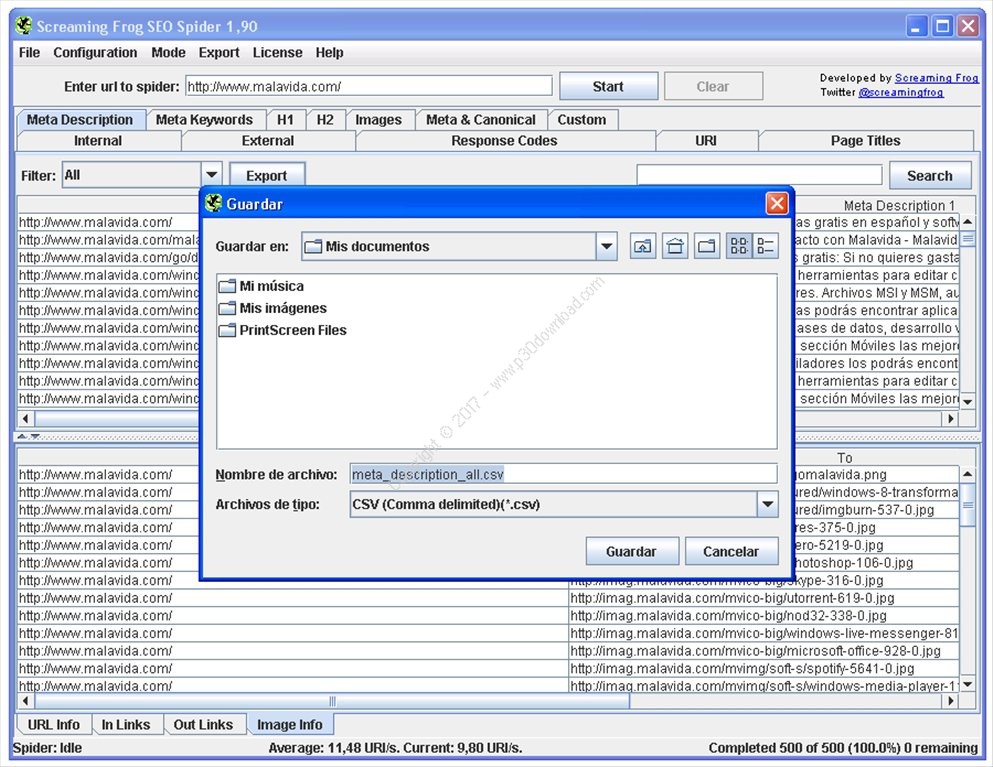

Ignore robots.txt file: if we have blocked certain areas of our site by using the file robots.txt.Crawl subdomains: if our site has multiple subdomains and wants to “ spider” it, we need to check this option.This option is very useful if we want to know the total number of “ dofollow” pages our site contains. Follow “nofollow” internal or external links: with this option, the spider will follow “ nofollow” links or ignore them.Check External Links: if our site has links to other sites, this option will check that these links are not broken.Check Images, CSS, Javascript, SWF: this option enables the program to check files, CSS, JS, etc, on the web pages, reporting any broken link found.Some of the options that we can set are the following: The first thing we must do is set the “spider” (this is how the name of the program will visit and collect information from the web pages that make up the site). After a minute or hours, depending on the size and depth of the site, we will get a report with useful information that we can filter and rearrange to look at possible failures or errors in the website. The program is easy to use we only need to set some basic settings and input the site's URL that we want to analyze. Screaming Frog SEO Spider is an ideal tool to analyze and report website problems.
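To make the effect of these options more concrete, here is a minimal Python sketch of how a generic crawler might decide whether to visit a URL. It is not how Screaming Frog is implemented: the `CrawlOptions` class, its field names, and the `should_crawl` function are all hypothetical and only mirror the settings described above. It also simplifies on purpose, applying one set of robots.txt rules to every host and treating a subdomain as any host ending in the start host.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlparse
from urllib.robotparser import RobotFileParser


@dataclass
class CrawlOptions:
    # Hypothetical names that mirror the settings described in the text.
    ignore_robots_txt: bool = False
    crawl_subdomains: bool = False
    follow_nofollow: bool = False
    max_pages: Optional[int] = None  # None means "no limit"


def should_crawl(url, start_url, options, robots, pages_crawled,
                 rel_nofollow=False):
    """Return True if a crawler with these options would visit `url`."""
    # Limit the total number of pages to crawl.
    if options.max_pages is not None and pages_crawled >= options.max_pages:
        return False

    # Follow "nofollow" links only when the option is enabled.
    if rel_nofollow and not options.follow_nofollow:
        return False

    # Stay on the start host unless subdomains are allowed.
    # (External links would be checked for breakage, not crawled.)
    start_host = urlparse(start_url).hostname or ""
    host = urlparse(url).hostname or ""
    if host != start_host:
        is_subdomain = host.endswith("." + start_host)
        if not (is_subdomain and options.crawl_subdomains):
            return False

    # Respect robots.txt unless told to ignore it.
    if not options.ignore_robots_txt and not robots.can_fetch("*", url):
        return False

    return True


# Example robots.txt rules, parsed from memory so no network access is needed.
robots = RobotFileParser()
robots.parse(["User-agent: *", "Disallow: /private/"])

start = "https://example.com/"
blocked = "https://example.com/private/report"

print(should_crawl(blocked, start, CrawlOptions(), robots, 0))
print(should_crawl(blocked, start, CrawlOptions(ignore_robots_txt=True), robots, 0))
```

With the default options the blocked page is skipped, while enabling `ignore_robots_txt` lets the same page through, which is the behavior the “Ignore robots.txt file” option describes.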
